Mistaken Data

Hm. That does not look right.

-

Professor who studied honesty loses tenure over faked data

A couple years ago, Harvard professor Francesca Gino was accused of faking data,…

-

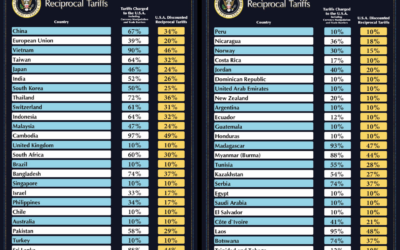

Simplified tariff calculations, ambiguously reciprocal

One might assume decisions that swing economies worldwide were based on careful research…

-

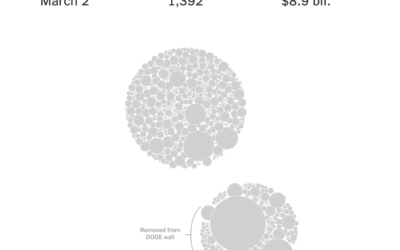

Wall of receipts is wall of inconsistent records

The “Department of Government Efficiency” keeps a “Wall of Receipts” to signal transparency…

-

Living 82-year-old Social Security recipient erroneously marked as dead

Pam Johnson got an email from her bank about her husband’s death. The…

-

$8 billion was actually $8 million in DOGE mistake

The DOGE site has a “wall of receipts” that claims $55 billion in…

-

150-year-olds probably not receiving Social Security benefits

The Department of Government Efficiency claims data in the Social Security Administration database…

-

Multiplication mistake leads to exaggerated plastic cautions

There was a brouhaha a couple months ago over research that suggested black…

-

Macy’s lost track of $154m in expenses because of poor metrics

For Bloomberg, Jeannette Neumann describes the accounting error:

For years, Macy’s Inc. touted…

-

Flawed Rotten Tomatoes ratings

Rotten Tomatoes aggregates movie reviews to spit out a freshness score for each…

-

Supermarket provides AI-driven meal planner and is disappointed by the internet using it to output weird recipes

A supermarket chain in New Zealand offered an AI-based recipe generator, and of…

-

Honesty research likely faked data

Research by Dan Ariely and Francesca Gino suggested that people were more honest…

-

Bias in AI-generated images

Lensa is an app that lets you retouch photos, and it recently added…

-

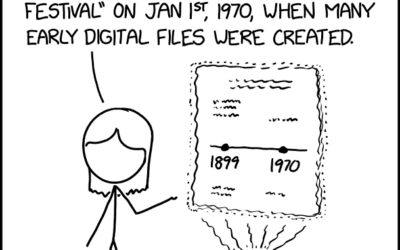

Historical data

Randall Munroe provides another fine observation through xkcd.

I often wonder what our…

-

Google Maps incorrectly pointing people to crisis pregnancy centers

Davey Alba and Jack Gillum, for Bloomberg, found that Google Maps commonly points…

-

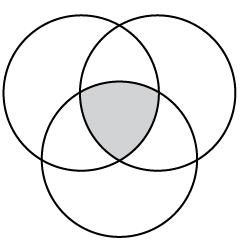

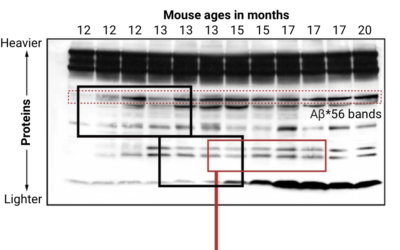

Looking for falsified images in Alzheimer’s study

Charles Piller, for Science, highlights the work of Matthew Schrag, who uses image…

-

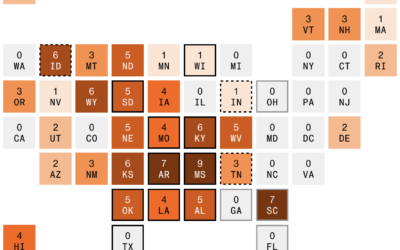

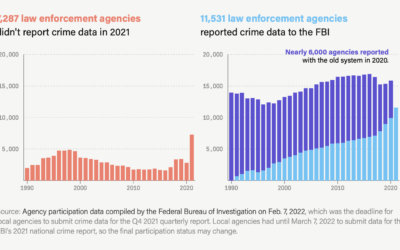

Unreliable FBI crime data

The Marshall Project and Axios report that the FBI changed their reporting system…

-

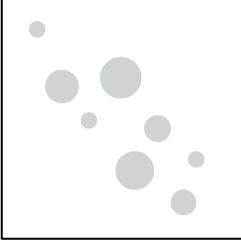

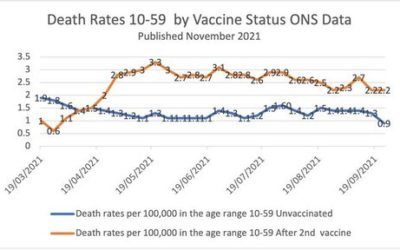

Simpson’s Paradox in vaccination data

This chart, made by someone who is against vaccinations, shows a higher mortality…

-

Scientists with bad data

Tim Harford warns against bad data in science:

Some frauds seem comical. In…

-

‘Less than 10 percent’ outdoors

The CDC said that “less than 10 percent” of coronavirus cases were from…

-

Excel spreadsheet limit leads to 16,000 Covid-19 cases left off daily count

Microsoft Excel is useful for many things, but it has its limitations (like…

Visualize This: The FlowingData Guide to Design, Visualization, and Statistics (2nd Edition)