It continues to get easier to take someone’s face and put that person…

Statistics

More than mean, median, and mode.

-

Regulating deepfakes

-

OpenAI previews voice synthesis

OpenAI previewed Voice Engine, a model to generate voices that mimic, using just…

-

Examining the dataset driving machine learning systems

For Knowing Machines, an ongoing research project that examines the innards of machine…

-

National identity stereotypes through generative AI

For Rest of World, Victoria Turk breaks down bias in generative AI in…

-

Flipbook Experiment, like the Telephone game but visual

This looks fun. The Pudding is running an experiment that functions like a…

-

Language-based AI to chat with her dead husband

For the past few years, Laurie Anderson has been using an AI chatbot…

-

Love: math or magic?

This American Life tells the tales as old as time:

When it comes…

-

DNA face to facial recognition in attempt to find suspect

In an effort to find a suspect in a 1990 murder, there was…

-

Collection of NBA basketball data sources and apps

If you’re into basketball data, Sravan Pannala is keeping a running list of…

-

Coin flips might tend towards the same side they started

The classic coin flip is treated as a fair way to make decisions,…

-

AI-based things in 2023

There were many AI-based things in 2023. Simon Willison outlined what we learned…

-

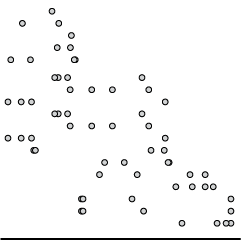

Estimating the size of YouTube

YouTube doesn’t offer numbers for how big they are, so Ethan Zuckerman and…

-

Building fair algorithms

Emma Pierson and Kowe Kadoma, for Fred Hutchinson Cancer Center, have a short…

-

Data on net worth, income, and savings

Data for the 2022 Survey of Consumer Finances, from the Federal Reserve Board,…

-

When Mike Breen announces “Bang!”

Mike Breen is a well-known NBA basketball announcer. When a player hits a…

-

Nobel Prize for research in global labor markets, using historical data

Claudia Goldin, an economist at Harvard, has won the Nobel Prize in Economics.…

-

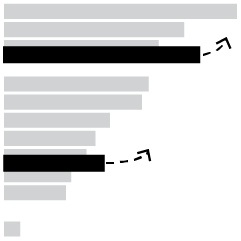

News organizations blocking OpenAI

Ben Welsh has a running list of the news organizations blocking OpenAI crawlers:…

-

Crows might understand probabilities

Researchers at the University of Tübingen are studying crows’ abilities to understand statistical…

-

Tattoos and impulsiveness dataset

For their research on tattoos and choice, Bradley Ruffle and Anne Wilson provide…

-

Flawed Rotten Tomatoes ratings

Rotten Tomatoes aggregates movie reviews to spit out a freshness score for each…

Visualize This: The FlowingData Guide to Design, Visualization, and Statistics
