Eric Katz reporting for Government Executive:

Staffing at the National Weather Service will…

News

Conferences, workshops, contests, and the like.

-

Nominee to lead NOAA prioritizes hiring people

-

Nate Silver on Disney’s 538

Nate Silver writes a few thoughts on the closing of FiveThirtyEight:

For more… -

FiveThirtyEight is shutting down completely

Disney is cutting news jobs, and FiveThirtyEight, which was absorbed by ABC News…

-

Census.gov is down

As of Friday, January 31, 2025 at 3:26pm PST, the U.S. Census Bureau…

-

Chartr was acquired by Sherwood Media

I missed this announcement at the end of last year:

Sherwood Media, LLC… -

An open-access journal for visualization research

In an effort to provide a more transparent process in visualization and interaction…

-

Mona Chalabi wins Pulitzer for data illustrations

Mona Chalabi, known around these parts for her illustrative approach to data journalism,…

-

FiveThirtyEight layoffs

Disney began more layoffs, and data-centric FiveThirtyEight, which is owned by Disney, was…

-

Unemployed data scientist

It seems a lot of data scientists have either left or were laid…

-

House raided of fired Florida data manager in charged of Covid-19 dashboard

Jeffrey Schweers for Tallahassee Democrat:

State police brandishing firearms Monday raided the Tallahassee… -

Seems relevant

Just looking back at the past eight or nine months of coronavirus posts…

-

Salesforce to acquire Tableau

From Tableau CEO Adam Selipsky:

In 2003, Tableau set out to pioneer self-service… -

Giorgia Lupi joins Pentagram

Giorgia Lupi, whose work exemplifies the use of data and visualization outside of…

-

DataKind receives $20M grant to expand on data for social good

DataKind, the organization known for helping others use data for social good, received…

-

Amanda Cox promoted to New York Times data editor

Amanda Cox is the new data editor for The New York Times:

As… -

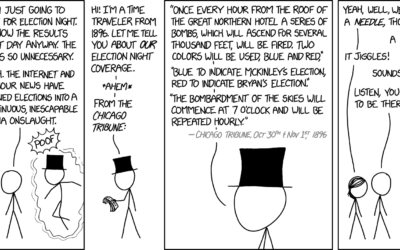

xkcd and the needle of probability

xkcd referenced the ever-so-loved forecasting needle. I’m so not gonna look at it…

-

The Markup is a new journalism venture to examine technology through data

Founded by Sue Gardner, the former head of the Wikimedia Foundation and Julia…

-

Census Bureau budget cuts

The unexpected resignation of Census director John H. Thompson was likely related to…

-

Census director resigns

The U.S. Census director John H. Thompson resigned and will leave June 30.…

-

Participate in the Data Visualization Community Survey

Elijah Meeks is running a detailed survey about data visualization people to gain…

Visualize This: The FlowingData Guide to Design, Visualization, and Statistics (2nd Edition)

Visualize This: The FlowingData Guide to Design, Visualization, and Statistics (2nd Edition)